
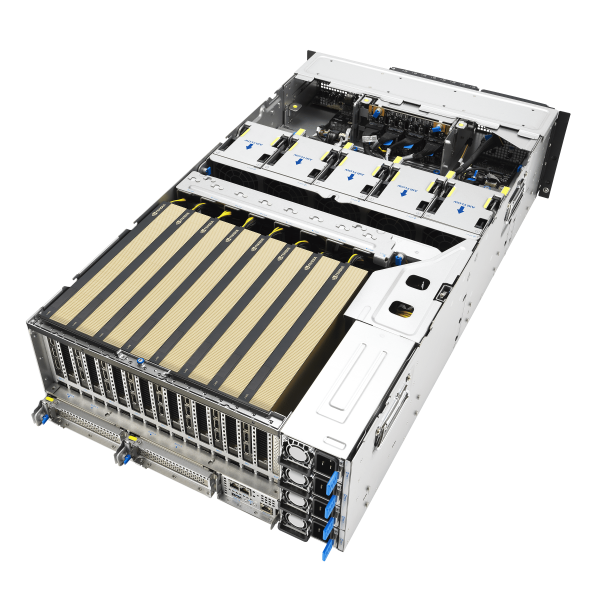

VRLA Tech AMD EPYC Server – 4U Rack


Description

4U Rackmount GPU Server for AI, HPC, and LLM Workloads

Unlock next-level AI and high-performance computing with the VRLA Tech AMD EPYC Server – 4U Rack, designed from the ground up for massive GPU acceleration, dense compute, and modular scalability. Built for demanding enterprise workloads such as LLM training, machine learning, virtualization, rendering, and scientific simulation, this server pairs high GPU density with flexible, modular configuration options.

Ultimate GPU Compute Power in a High-Density 4U Chassis

Engineered to support up to eight dual-slot, high-wattage GPUs, this server delivers extreme computational throughput in a compact footprint. It accepts GPUs such as the NVIDIA H200 NVL and the NVIDIA RTX PRO 6000 Blackwell Server Edition and, in its largest configuration, provides roughly 1TB of combined GPU memory for large-scale AI model training, inference, and data-intensive HPC workflows.

– Supports up to 8x Dual-Width GPUs

– Each GPU rated up to 600W

– Fully compatible with NVIDIA NVLink and AMD Infinity Fabric interconnects
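To see where the combined-memory and power figures come from, here is a quick back-of-the-envelope sketch for a fully populated eight-GPU build. It assumes every slot holds the same card and uses NVIDIA's published per-GPU capacities (141 GB for H200 NVL, 96 GB for RTX PRO 6000 Blackwell Server Edition), which are not stated on this page; the 600 W ceiling is the per-GPU rating listed above. The helper name `aggregate_gpu_memory_gb` is purely illustrative.

```python
# Back-of-the-envelope totals for a fully populated 8-GPU build.
# Assumption: per-GPU memory figures are NVIDIA's published capacities
# (not stated on this page); 600 W is the page's per-GPU rating.
GPU_SLOTS = 8
MAX_WATTS_PER_GPU = 600

GPU_MEMORY_GB = {
    "NVIDIA H200 NVL": 141,                              # HBM3e
    "NVIDIA RTX PRO 6000 Blackwell Server Edition": 96,  # GDDR7
}


def aggregate_gpu_memory_gb(per_gpu_gb: int, count: int = GPU_SLOTS) -> int:
    """Hypothetical helper: total GPU memory when every slot holds the same card."""
    return per_gpu_gb * count


for name, mem in GPU_MEMORY_GB.items():
    print(f"{name}: {aggregate_gpu_memory_gb(mem)} GB total")

# Worst-case GPU-only power draw if all eight cards run at their rated limit.
print(f"GPU power budget: {GPU_SLOTS * MAX_WATTS_PER_GPU} W")
```

Under these assumptions, eight H200 NVL cards total 1,128 GB (about 1.1 TB), while eight RTX PRO 6000 cards total 768 GB. The 4,800 W figure covers the GPUs alone and excludes CPUs, memory, storage, and cooling.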

Powered by AMD EPYC 9005 Series Processors

At the heart of this platform are dual AMD EPYC 9005 Series processors, offering up to 192 Zen 5c cores across two sockets. With support for DDR5-6400 memory across 12 channels per socket and PCIe Gen 5.0 throughout, this system is ready for the most intensive memory- and compute-bound workloads.

– Up to 384 threads of CPU processing power

– DDR5 support for massive memory bandwidth

– PCIe Gen 5.0 lanes for high-speed I/O and GPU interconnects
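The thread count and the "massive memory bandwidth" claim both follow from simple arithmetic. The sketch below computes theoretical peaks only, assuming SMT is enabled (two hardware threads per core), 12 DDR5 channels per socket, a 64-bit (8-byte) data path per channel, and every channel running at the full 6400 MT/s. Sustained bandwidth in real workloads is lower than these peaks. The function name `peak_bandwidth_gb_s` is a hypothetical helper.

```python
# Theoretical peak figures for the dual-socket CPU/memory subsystem.
# Assumptions: SMT enabled (2 threads per core), 12 DDR5 channels per socket,
# 8 bytes per transfer per channel, all channels at 6400 MT/s.
SOCKETS = 2
TOTAL_CORES = 192           # page's stated maximum across both sockets
THREADS_PER_CORE = 2        # SMT
CHANNELS_PER_SOCKET = 12
TRANSFER_RATE_MT_S = 6400   # DDR5-6400
BYTES_PER_TRANSFER = 8      # 64-bit channel data path


def peak_bandwidth_gb_s(channels: int) -> float:
    """Hypothetical helper: peak DRAM bandwidth in decimal GB/s (rate x bytes x channels)."""
    return TRANSFER_RATE_MT_S * 1e6 * BYTES_PER_TRANSFER * channels / 1e9


threads = TOTAL_CORES * THREADS_PER_CORE
per_socket = peak_bandwidth_gb_s(CHANNELS_PER_SOCKET)

print(f"Hardware threads: {threads}")
print(f"Peak memory bandwidth per socket: {per_socket:.1f} GB/s")
print(f"Peak memory bandwidth, both sockets: {per_socket * SOCKETS:.1f} GB/s")
```

With these inputs the sketch reports 384 hardware threads, matching the figure above, and a theoretical peak of 614.4 GB/s per socket (1,228.8 GB/s across both sockets).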

Future-Proof with NVIDIA MGX Architecture

This AMD EPYC server features MGX compatibility, allowing modular and scalable AI infrastructure designs. With support for 160+ customizable configurations, the server adapts to your specific use case—whether that’s model training, simulation, multi-tenant virtualization, or edge AI inference.

– Industry-leading modular design for flexible deployments

– Scalable and future-ready architecture for evolving workloads

– Fast time-to-market and high ROI for data center expansion

Next-Generation Interconnect and Switching

This system uses Broadcom PEX89000 series PCIe Gen 5.0 switches, offering up to 1,024 Gbps of raw bandwidth per port. This enables high-speed data transfer between GPUs, CPUs, and storage endpoints, making the platform well suited to latency-sensitive and compute-intensive applications.

– PCIe 5.0 with high-bandwidth switching

– Hyper-converged architecture optimized for AI/ML/DL workloads

– Industry-first per-port bandwidth
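The 1,024 Gbps per-port figure is consistent with PCIe Gen 5.0 signaling rates. This sketch assumes that a "port" means an x16 link and that the figure counts both directions together; it uses the Gen 5.0 rate of 32 GT/s per lane and 128b/130b line encoding. It does not model packet (TLP/DLLP) overhead, so real transfer rates are lower still.

```python
# Raw vs. encoded bandwidth of one PCIe Gen 5.0 link.
# Assumptions: a "port" is an x16 link, and the 1,024 Gbps figure counts
# both directions combined. Gen 5.0 runs at 32 GT/s per lane with
# 128b/130b line encoding; packet-level protocol overhead is ignored.
GT_PER_S_PER_LANE = 32
LANES = 16
ENCODING_EFFICIENCY = 128 / 130

raw_one_way_gbps = GT_PER_S_PER_LANE * LANES   # each direction
raw_both_ways_gbps = 2 * raw_one_way_gbps      # both directions combined
usable_one_way_gbytes = raw_one_way_gbps * ENCODING_EFFICIENCY / 8  # bits -> bytes

print(f"Raw, per direction: {raw_one_way_gbps} Gbps")
print(f"Raw, both directions: {raw_both_ways_gbps} Gbps")
print(f"After 128b/130b encoding, per direction: {usable_one_way_gbytes:.1f} GB/s")
```

Under these assumptions an x16 Gen 5.0 port carries 512 Gbps raw in each direction (1,024 Gbps combined), or about 63.0 GB/s per direction after line encoding.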

Designed for AI, Deep Learning, and HPC

The VRLA Tech AMD EPYC Server is purpose-built for:

– Large Language Model (LLM) Training & Inference

– Enterprise AI & Deep Learning

– Scientific Computing & Simulation

– Virtual Machine Hosting & Virtualization

– Data Center & Cloud Infrastructure

– High-Performance Workstations & Research Labs

Additional information

| Specification | Value |
|---|---|
| Weight | 40 lbs |
| Dimensions | 26 × 14 × 27 in |
