
Purchase with confidence: 3-year warranty from the date of delivery, plus lifetime support.

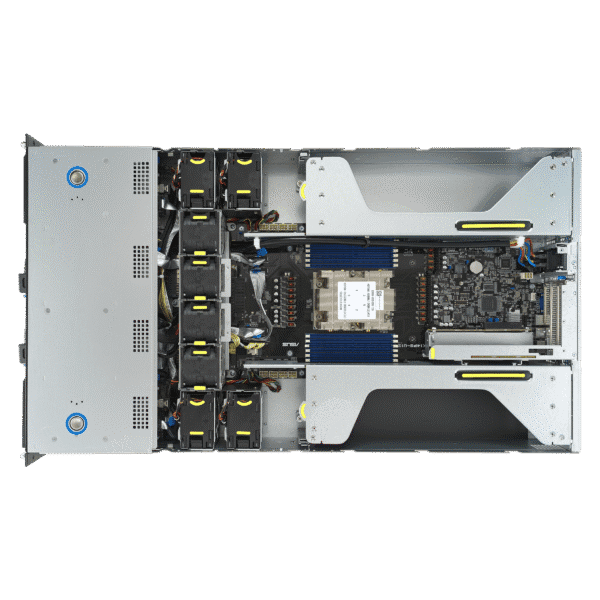

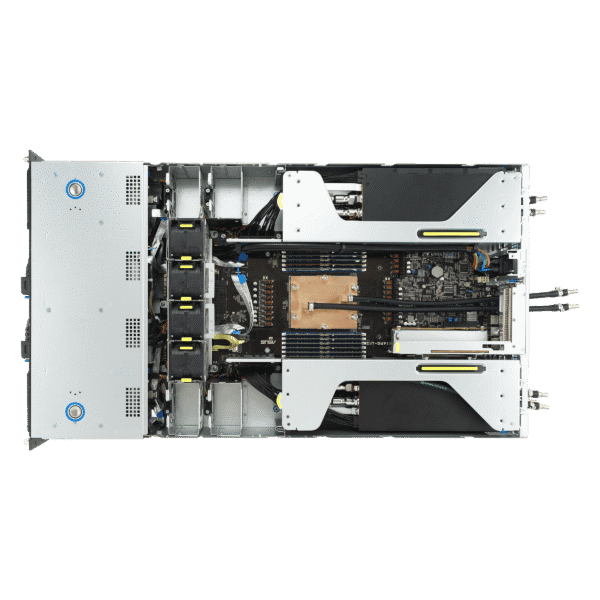

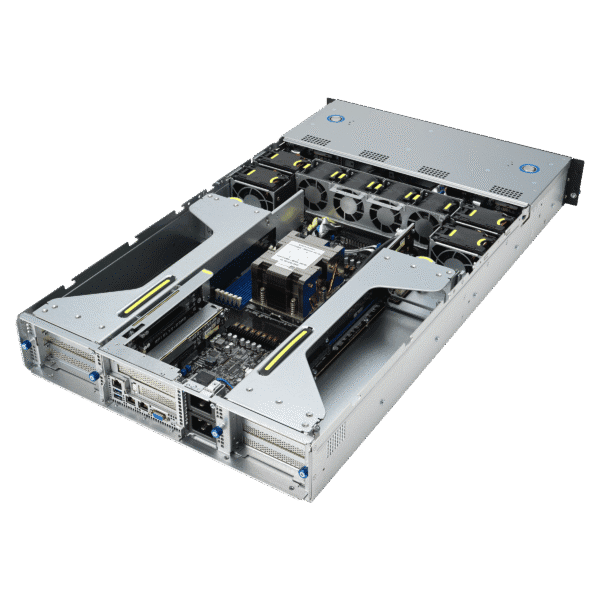

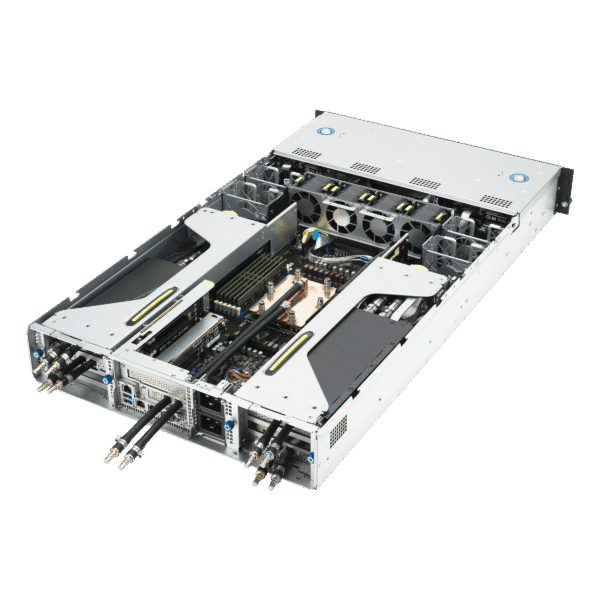

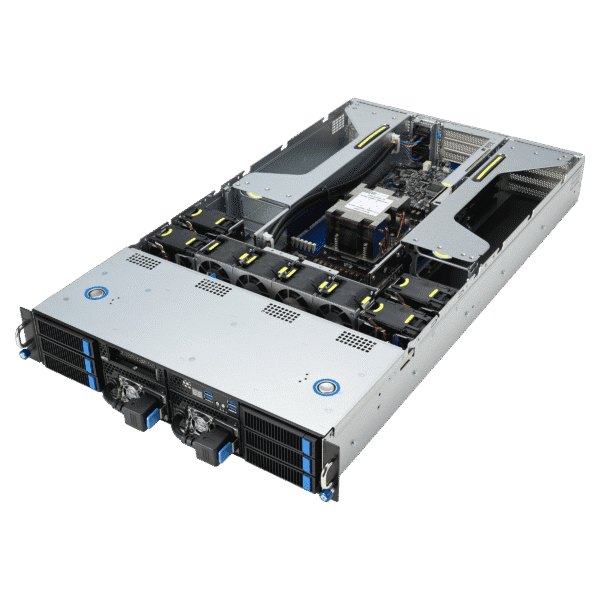

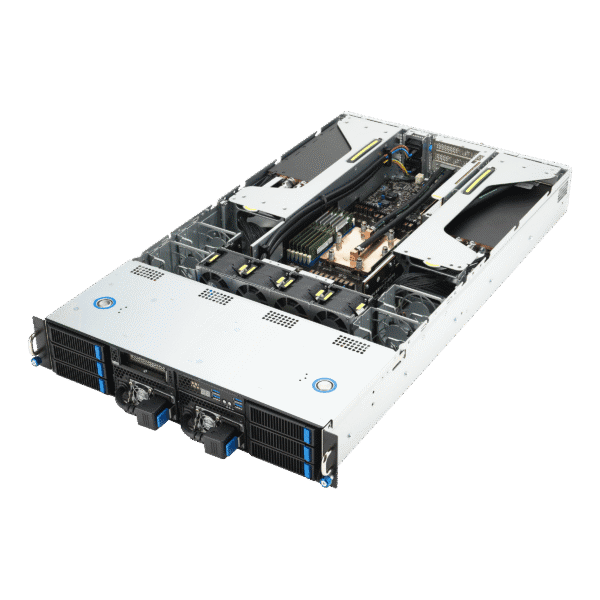

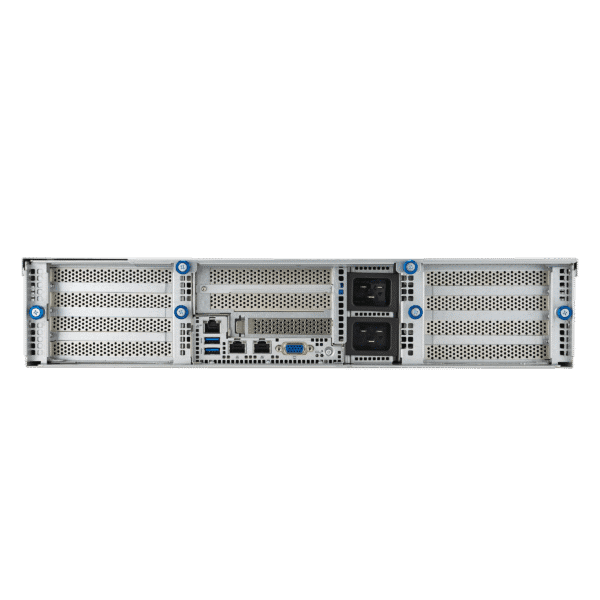

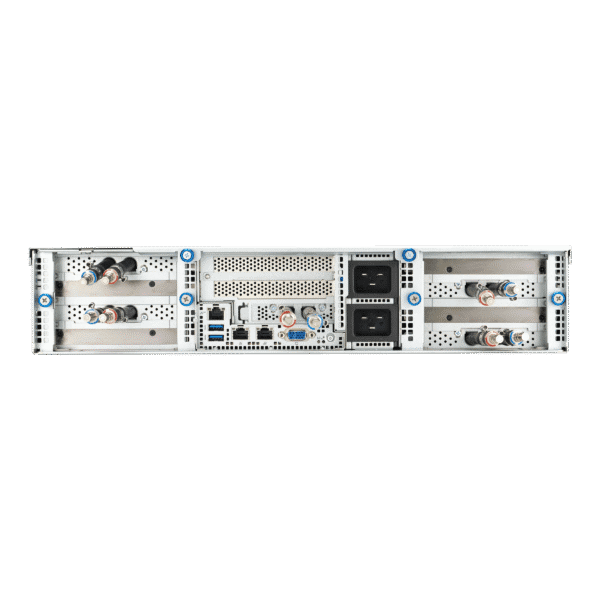

* The PC images show a range of specs and upgrades. The appearance of your PC may differ depending on the specs you choose.

VRLA Tech AMD EPYC Server for AI Large Language Models (LLMs)

$55,949.96


Description

Unlock Unparalleled Performance for AI Workloads

The AMD EPYC Server for AI Large Language Models is meticulously designed to meet the rigorous demands of modern artificial intelligence and deep learning workloads. Ideal for researchers, data scientists, and enterprises tackling complex AI-driven tasks, this 2U rack server delivers exceptional performance, scalability, and efficiency.

Powered by AMD EPYC 9004 Series processors with up to 96 cores and multithreading support, this server offers the computational strength required to train large-scale language models and run inference on them efficiently. With support for up to 4TB of DDR5 ECC RAM, it provides the memory capacity and bandwidth needed to process massive datasets and handle parallel computations. The server also features PCIe Gen5 slots, enabling integration with current accelerators, such as NVIDIA H100 GPUs or AMD Instinct accelerators, to maximize performance for AI training and inference.
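When choosing a memory configuration, a rough estimate of how much space a model's weights occupy can be a useful starting point. The sketch below is illustrative only: the function names and the example model sizes are hypothetical, and it counts weights alone, not activations, optimizer state, or KV cache, which can add substantially to the total.

```python
# Rough sizing sketch: memory needed just to hold model weights.
# Assumption: bytes per parameter are the standard widths of each numeric
# format; activations, optimizer state, and KV cache are NOT counted.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weight_memory_gb(num_params: float, dtype: str = "fp16") -> float:
    """Return the approximate weight footprint in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_in_ram(num_params: float, dtype: str, ram_tb: float = 4.0) -> bool:
    """Check whether the weights alone fit in the given system RAM (in TB)."""
    return weight_memory_gb(num_params, dtype) <= ram_tb * 1000


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only for illustration.
    for params in (7e9, 70e9, 175e9):
        gb = weight_memory_gb(params, "fp16")
        print(f"{params / 1e9:.0f}B parameters in fp16: ~{gb:.0f} GB of weights")
```

Training typically needs several times the weight footprint, so treat the result as a lower bound when sizing RAM or GPU memory.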

Storage scalability is a core strength of this system, with support for up to 12 hot-swappable NVMe drives for ultra-fast data access and configurable RAID options for data redundancy and reliability. Advanced connectivity options, including dual 10GbE LAN ports and optional 25GbE or 100GbE NICs, ensure low-latency communication across distributed training setups. The 2U chassis is equipped with a high-efficiency thermal design, redundant fans, and dual 1600W 80+ Platinum power supplies to maintain peak performance, uptime, and energy efficiency.

Whether you’re training large language models like GPT and BERT, advancing AI research and development, or managing data-intensive applications, this server is the perfect solution. Built for mission-critical workloads, it offers the reliability and flexibility required to meet the demands of cutting-edge AI initiatives.

At VRLA Tech, we understand the unique challenges of AI and machine learning workloads. That’s why our AMD EPYC Servers are rigorously tested to ensure optimal performance and scalability. With customizable configurations, expert support, and competitive pricing, we are your trusted partner in powering tomorrow’s AI breakthroughs. Configure your AMD EPYC Server for AI Large Language Models today and unlock the future of innovation.

Additional information

| Attribute | Value |
|---|---|
| Weight | 40 lbs |
| Dimensions | 26 × 14 × 27 in |


You may also like

- VRLA Tech AMD Ryzen Threadripper Workstation (rated 5.00 out of 5): $8,864.98

- VRLA Tech Intel Xeon Workstation: from $10,999.99

- VRLA Tech AMD Ryzen Workstation: $4,464.98

Reviews

There are no reviews yet.